- Published: 01.10.2025 - Reading time: 9 minutes

From the need-to-know to the need-to-share principle: data availability instead of information silos

Need-to-share: from restrictive control to scalable collaboration

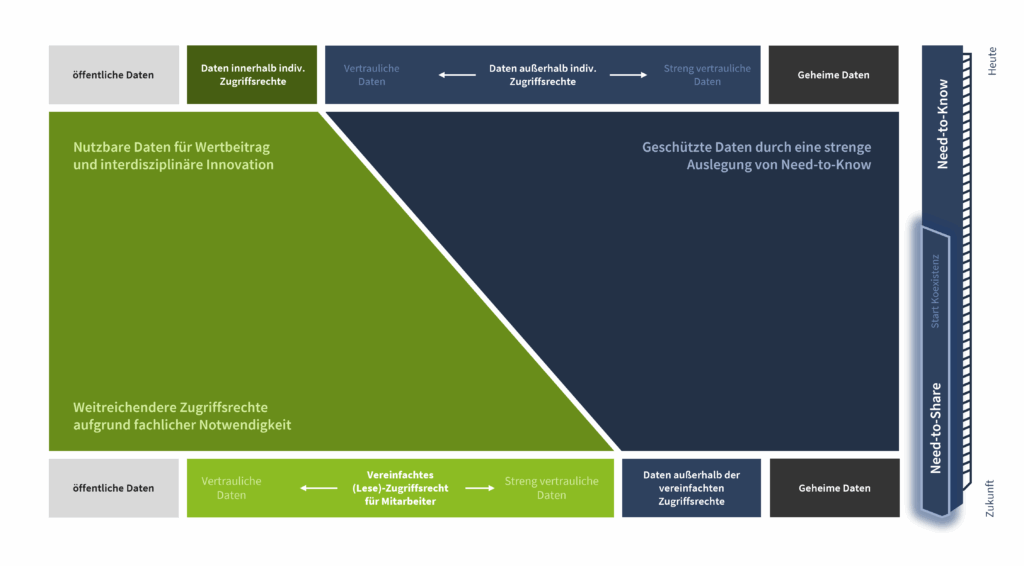

The classic need-to-know principle has guaranteed data protection and compliance for many years. It is now clear, however, that innovation, speed and efficiency suffer when only a few experts have access to the most important information. Companies that want to grow and remain competitive need to rethink their approach. This does not mean opening up data indiscriminately, but expanding data access in a targeted way, based on risk and benefit. A scalable release process that combines transparency and security becomes the key factor. The result is a new paradigm in which data can be used dynamically and flexibly to drive value creation, AI applications and innovation without losing sight of regulatory requirements.

The importance of data transparency - a success factor for innovation and corporate resilience

Without transparency, the potential of even the highest-quality data often remains untapped. A central data catalog that semantically describes business objects, makes protection requirement classifications visible and traceably maps user rights sheds light on the data landscape. This not only enables the automated release of data and a rapid response to new business requirements, but also forms the basis for AI applications, data-based governance and efficient collaboration, including across departments and with external partners. Employees benefit from clear processes; managers gain a new basis for managing value streams as well as control over compliance and data protection.

Challenges and solutions of the need-to-know principle in today's data practice

Introducing a central, semantically enriched data catalog (Business Object Repository) lays the foundation for a modern, data-driven organization. All relevant business objects are classified together with their protection requirement level and need-to-share capability and are referenced unambiguously in the catalog. Mapping them to physical schemas not only creates clarity, but also makes protection requirement classifications reusable and promotes the automation of data-related processes. The result is a single point of truth, which is essential both for automated approvals and for making internal company databases usable for AI-based analysis.
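To make the catalog idea more concrete, here is a minimal Python sketch. It assumes a hypothetical four-level protection scheme and illustrative class names (`ProtectionLevel`, `BusinessObject`, `DataCatalog`) that do not come from any specific product. It shows how a classification stored once on a business object can be reused for every physical column mapped to it.

```python
from dataclasses import dataclass, field
from enum import IntEnum


class ProtectionLevel(IntEnum):
    # Hypothetical four-tier scheme; real classification schemes vary by company.
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    STRICTLY_CONFIDENTIAL = 3


@dataclass(frozen=True)
class BusinessObject:
    name: str
    protection_level: ProtectionLevel
    shareable: bool  # need-to-share capability


@dataclass
class DataCatalog:
    objects: dict[str, BusinessObject] = field(default_factory=dict)
    # Physical location ("system.table.column") -> business object name
    physical_mapping: dict[str, str] = field(default_factory=dict)

    def register(self, obj: BusinessObject) -> None:
        self.objects[obj.name] = obj

    def map_physical(self, location: str, object_name: str) -> None:
        # Only allow mappings to business objects that exist in the catalog.
        if object_name not in self.objects:
            raise KeyError(f"unknown business object: {object_name}")
        self.physical_mapping[location] = object_name

    def classify(self, location: str) -> BusinessObject:
        # The classification lives on the business object and is reused
        # for every physical column mapped to it.
        return self.objects[self.physical_mapping[location]]


# Usage: one business object, two physical columns in different systems.
catalog = DataCatalog()
catalog.register(BusinessObject("Part number", ProtectionLevel.INTERNAL, shareable=True))
catalog.map_physical("plm.parts.part_no", "Part number")
catalog.map_physical("erp.orders.part_no", "Part number")
print(catalog.classify("erp.orders.part_no").protection_level.name)  # INTERNAL
```

Because both columns resolve to the same business object by name, re-registering that object with a new classification updates it for every mapped column at once.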

Structured responsibilities and clear authorization models are essential for scalable data release. Analyzing and consolidating risk and approval processes across the organization creates clear roles, transparent decision-making paths and uniform review mechanisms. This dissolves historically grown bottlenecks, myths about personal liability and exaggerated interpretations of regulatory requirements, and specifically promotes smooth cooperation between IT, specialist departments and corporate security.

The data object-based approach allows protection requirement classifications to be stored directly on the data object, where they can be reused. A data-oriented, tool-supported release process uses automation to accelerate the release of non-critical data in particular, while sensitive data is accompanied by comprehensive contextual information for a well-founded risk-benefit assessment. This creates an efficient, traceable structure that can be used across all applications and is essential for dynamic AI and analytics scenarios in particular.
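The release logic described above could be sketched as follows. This is an illustration under stated assumptions, not a reference implementation: the protection levels, the threshold `AUTO_RELEASE_MAX`, the `owner` field and the function `route_release` are all hypothetical. Non-critical, shareable objects are released automatically; everything else goes to a reviewer together with the context needed for a risk-benefit assessment.

```python
from dataclasses import dataclass
from enum import IntEnum


class ProtectionLevel(IntEnum):
    # Hypothetical four-tier scheme.
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    STRICTLY_CONFIDENTIAL = 3


# Assumption: everything up to INTERNAL counts as non-critical.
AUTO_RELEASE_MAX = ProtectionLevel.INTERNAL


@dataclass(frozen=True)
class BusinessObject:
    name: str
    protection_level: ProtectionLevel
    shareable: bool
    owner: str  # hypothetical: data owner who reviews sensitive requests


@dataclass
class ReleaseDecision:
    approved: bool
    needs_review: bool
    context: dict


def route_release(obj: BusinessObject, requester: str, purpose: str) -> ReleaseDecision:
    if obj.shareable and obj.protection_level <= AUTO_RELEASE_MAX:
        # Non-critical data: released automatically, no manual step.
        return ReleaseDecision(approved=True, needs_review=False, context={})
    # Sensitive or non-shareable data: hand over to a human reviewer with
    # the context needed for a risk-benefit assessment.
    return ReleaseDecision(
        approved=False,
        needs_review=True,
        context={
            "object": obj.name,
            "protection_level": obj.protection_level.name,
            "owner": obj.owner,
            "requester": requester,
            "purpose": purpose,
        },
    )


auto = route_release(
    BusinessObject("Part number", ProtectionLevel.INTERNAL, True, "PLM team"),
    requester="alice", purpose="spare parts analytics",
)
review = route_release(
    BusinessObject("Test bench measurements", ProtectionLevel.CONFIDENTIAL, True, "Powertrain"),
    requester="alice", purpose="AI training",
)
print(auto.approved, review.needs_review)  # True True
```

The design choice here is that automation never rejects a request on its own: anything it cannot release is escalated with context, so the manual effort is concentrated on the sensitive cases.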

Successful projects show that, in addition to regulation and technology, employee acceptance and understanding in particular must change. Clear lines of communication, transparent guidelines and company-wide training help to reduce reservations about new approval concepts. The key is to focus on the central change in data culture: data should no longer be seen as a “treasure” to be guarded by a specific department, but as a collective asset of the entire organization. Close coordination between IT, specialist departments and corporate security ensures a sustainable transfer of knowledge and establishes the cooperative handling of data as a success factor.

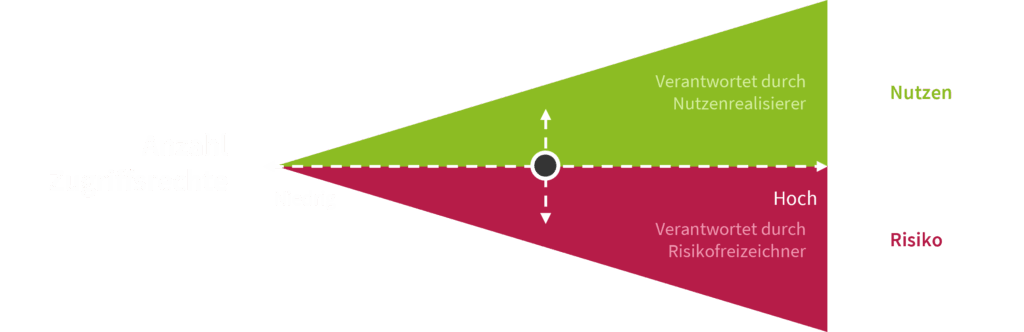

Effective data approval means more than just avoiding risks: only when the approval process explicitly integrates opportunity assessments and systematically weighs business benefits against potential risks does a truly value-adding decision-making culture emerge. Directly comparing risks and added value in the approval document enables quick, comprehensible decisions and shows that company-wide need-to-share capability and compliance are by no means contradictory; rather, together they pave the way for scalable data innovation.
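One simple way to put risks and added value side by side in an approval document is a likelihood-times-impact score for each item. The sketch below assumes hypothetical 1-to-5 scales and illustrative names (`Item`, `ApprovalDocument`); it is one possible scoring approach, not a method prescribed by the article, and it only produces a recommendation for the human approver.

```python
from dataclasses import dataclass, field


@dataclass
class Item:
    description: str
    likelihood: int  # hypothetical scale: 1 (rare) .. 5 (almost certain)
    impact: int      # hypothetical scale: 1 (minor) .. 5 (severe / very high value)

    @property
    def score(self) -> int:
        return self.likelihood * self.impact


@dataclass
class ApprovalDocument:
    data_object: str
    risks: list[Item] = field(default_factory=list)
    benefits: list[Item] = field(default_factory=list)

    def summary(self) -> dict:
        risk = sum(r.score for r in self.risks)
        benefit = sum(b.score for b in self.benefits)
        return {
            "data_object": self.data_object,
            "risk_score": risk,
            "benefit_score": benefit,
            # Recommendation only; the final decision stays with the approver.
            "recommendation": "approve" if benefit > risk else "escalate",
        }


doc = ApprovalDocument("Test bench measurements")
doc.risks.append(Item("Disclosure of supplier-specific values", likelihood=2, impact=4))
doc.benefits.append(Item("Faster root-cause analysis", likelihood=4, impact=4))
print(doc.summary()["recommendation"])  # approve
```

Keeping risks and benefits in the same document and on the same scale is what makes the comparison explicit; the weighting itself would need to be agreed with corporate security.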

Need-to-share as a data-oriented, scalable concept

The need-to-share principle is based on a data object-based model: protection requirement classifications are stored directly and reusably on business objects and are available for a wide range of applications. A semantically enriched data catalog not only enables automated access decisions, but also provides a company-wide data ontology. This transparency makes it easier to assess the opportunities and risks of each release project and enables the immediate, context-sensitive release of non-critical data. For sensitive data, the catalog supports a fast, comprehensible decision-making process that weighs up security and added value.

Scalability & agility through need-to-share

Companies that consistently implement need-to-share drastically shorten approval cycles and make a leap in efficiency when developing digital products. Pilot projects show that when rights are extended from individuals to groups of people, the approval process becomes more complex, but at the same time faster and more transparent. Central approval processes, for example via a Business Object Repository, reduce duplicated work, provide quickly available, compliant data and can be flexibly extended to other use cases. This allows the organization to provide AI training data quickly, generate new insights and launch data-based innovations in the shortest possible time.
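Extending rights from individuals to groups means that a single approval covers many people. A minimal sketch of group-based grants might look like this; the `GroupGrants` class and the group, user and data object names are hypothetical.

```python
class GroupGrants:
    """Hypothetical sketch: access rights granted to groups instead of individuals."""

    def __init__(self) -> None:
        self.members: dict[str, set[str]] = {}  # group -> users
        self.grants: dict[str, set[str]] = {}   # data object -> groups

    def add_member(self, group: str, user: str) -> None:
        self.members.setdefault(group, set()).add(user)

    def grant(self, data_object: str, group: str) -> None:
        # One approval covers every current and future member of the group.
        self.grants.setdefault(data_object, set()).add(group)

    def can_access(self, user: str, data_object: str) -> bool:
        groups = self.grants.get(data_object, set())
        return any(user in self.members.get(g, set()) for g in groups)


grants = GroupGrants()
grants.add_member("powertrain-engineering", "alice")
grants.grant("Test bench measurements", "powertrain-engineering")
# A new team member gets access without a new approval.
grants.add_member("powertrain-engineering", "bob")
print(grants.can_access("bob", "Test bench measurements"))    # True
print(grants.can_access("carol", "Test bench measurements"))  # False
```

The single group approval is harder to decide, since it must consider everyone in the group, but it replaces many individual requests, which is where the speed gain comes from.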

From need-to-know to need-to-share: practical example "Chattable development data"

From the idea to the blueprint

Governance & regulation in the need-to-share approach: efficiency through clearly defined structures

Change communication and mindset transformation: the success factor for the rollout of need-to-share

Conclusion


Arrange a non-binding initial consultation now

- Expertise: Transformation of fragmented data landscapes into scalable, interconnected ecosystems

- Transparency: Establishing comprehensible structures for control, approval and compliance in data management

- Efficiency: Acceleration of value-added processes through automation and optimized data provision

- Sustainability: Anchoring a company-wide data mindset for long-term innovation and competitiveness

TISAX and ISO certification for the Munich office only
