- Published:

06.03.2026 - Reading time: 13 minutes

Reducing the AI carbon footprint: Optimize the energy use, CO₂ emissions and costs of your AI landscape sustainably

Why the AI carbon footprint is now a top priority:

Artificial intelligence (AI) is changing the economy, but its resource consumption is growing rapidly. Training and operating models require enormous amounts of computing power, electricity and water. Hyperscalers are investing heavily in securing strategic energy capacity. Many companies underestimate the extent to which AI has become a material driver of costs, energy consumption and CO₂ emissions.

Executive Summary: AI Carbon Footprint at a glance

- High costs & CO₂ load: AI models generate significant energy costs and emissions, especially during the ongoing operation of a trained model (inference). Costs and the CO₂ footprint grow in proportion to usage.

- Regulatory pressure is growing: CSRD, the EU taxonomy, ESG ratings and sustainability reports demand transparency about the energy consumption of digital systems, including AI models, data centers and cloud workloads.

- Optimization achievable: Model efficiency, carbon-aware scheduling, token and edge optimization can drastically reduce energy consumption, electricity costs, CO₂ emissions and water consumption.

- Economic added value: Companies can immediately reduce cloud spend, optimize hardware utilization, use resources more efficiently and build sustainable AI architectures for future scaling.

Companies often do not know how much power each AI model consumes, how high the CO₂ load per model is or how many resources inference and training runs consume. Without this transparency, optimization and reporting are hardly possible.

Many models are larger or more complex than necessary. Default training settings, unoptimized parameters, outdated pipelines and inefficient queries substantially increase energy consumption.

GPU/TPU instances are sometimes kept running around the clock, even though workloads fluctuate or could be shifted in time or location. The result: unnecessary costs from idle compute.
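A rough sense of what idle compute costs can be gained by counting the hours in which an always-on instance has no work at all. The sketch below assumes a constant idle power draw and an hourly utilization profile; the function name, the profile and the 0.5 kW idle draw are hypothetical.

```python
from typing import Sequence


def idle_energy_kwh(hourly_utilization: Sequence[float], idle_power_kw: float) -> float:
    """Energy an always-on instance draws during hours with zero utilization.

    Assumes a constant idle power draw. Partially used hours are not counted,
    so the estimate is conservative.
    """
    idle_hours = sum(1 for u in hourly_utilization if u == 0.0)
    return idle_hours * idle_power_kw


# Hypothetical day: busy from 08:00 to 18:00, no workload otherwise.
profile = [0.0] * 8 + [0.7] * 10 + [0.0] * 6
print(idle_energy_kwh(profile, idle_power_kw=0.5))  # 7.0
```

Scaled to a year and multiplied by the electricity price and the grid emission factor, such a figure gives a first estimate of what idle capacity costs in money and CO₂.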

Digital systems must now also be included in sustainability reports. AI consumption, energy sources, cooling requirements and Scope 2/3 emissions are subject to auditing and reporting. Water consumption and cooling costs are part of this reporting.

The CO₂ value of an AI model depends heavily on the country in which it is trained and operated and on how low-carbon the local electricity mix is. Many organizations do not take this into account.

Why act now? Impact of the AI carbon footprint on companies

The AI carbon footprint not only influences environmental and sustainability metrics, but also has a direct impact on cost structures, energy consumption, cloud usage and regulatory requirements. Companies need to understand how AI systems affect their operational performance, ESG strategy and technological competitiveness. Those who take active control now will reduce costs, emissions and dependencies – and create a resilient basis for scalable AI strategies.

- New technical obligations & data infrastructure

- High electricity & water consumption

- ESG reporting obligations

- Sustainability requirements of customers & investors

- Pressure to make AI transformation cost-efficient and climate-friendly

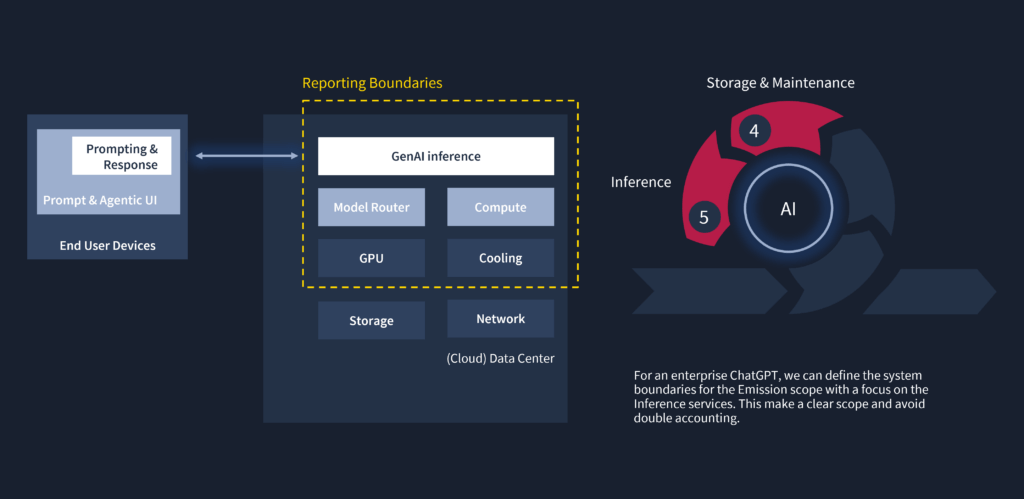

The AI lifecycle and its influence on the carbon footprint

The AI carbon footprint is not only generated during the training of models, but over the entire lifecycle of AI systems. A holistic view of the AI lifecycle is therefore crucial in order to fully understand, correctly report and effectively reduce emissions.

The AI lifecycle typically comprises the following phases:

- Data Acquisition & Preparation

- Model Training

- Inference

- Storage & Maintenance

- Hardware Manufacturing

- Hardware End of Life

Key finding:

For most organizations, AI-related emissions fall predominantly into Scope 3: they are less visible, but fully relevant for CSRD, GHG Protocol and ESG reporting. The inference phase in particular has a long-term scaling effect, while hardware decisions shape the footprint for years.


Define scope and determine framework conditions

Steps:

- Definition of the organizational and technical scope of application

- Identification of all relevant AI and IT applications

- Documentation of the usage scenarios

- Use of recognized standards (GHG Protocol, ISO 14064)

Result:

A clearly defined carbon accounting scope that determines which AI models, data centers, applications and activities are included in the emissions assessment.

Creating transparency about actual consumption

Steps:

- Collection of usage data (requests, tokens, runtimes)

- For on-premise systems: measurement of power consumption, ideally in real time

- Collection of architecture-relevant data: Model architecture, data centers, hardware, location

- The use of the standardized OpenFootprint data model supports interoperability and scalability
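The collection step can be pictured as one record per model, location and reporting period. The sketch below is an assumed minimal structure for illustration only; it is not the OpenFootprint data model, whose actual schema should be used where interoperability matters. All field names and values are hypothetical.

```python
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass(frozen=True)
class UsageRecord:
    """One reporting period of AI usage (hypothetical fields, not the OpenFootprint schema)."""

    model: str                          # model name and version
    region: str                         # data center location
    hardware: str                       # accelerator type
    requests: int                       # number of requests in the period
    input_tokens: int
    output_tokens: int
    runtime_hours: float                # accelerator time consumed
    energy_kwh: Optional[float] = None  # measured value, e.g. from on-premise metering

    @property
    def total_tokens(self) -> int:
        return self.input_tokens + self.output_tokens


record = UsageRecord(
    model="chat-small-v2",
    region="eu-central",
    hardware="gpu-type-a",
    requests=1_200,
    input_tokens=900_000,
    output_tokens=300_000,
    runtime_hours=2.5,
)
print(record.total_tokens)  # 1200000
print(asdict(record))
```

Leaving `energy_kwh` optional reflects the distinction in the steps above: on-premise systems can supply measured values, while cloud usage may have to be estimated from tokens and runtimes.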

Result:

A complete, standardized data set on energy, usage and infrastructure – as the basis for a precise and auditable emissions calculation.

Calculate emissions in a traceable and audit-proof manner

Steps:

- Emissions calculation based on consumption, location and infrastructure parameters

- Integration of external or existing emission calculators

- Storage of all values in the data model

- Ensuring auditability
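One common way to estimate operational, location-based emissions is to multiply a workload's IT energy by the data center's Power Usage Effectiveness (PUE) and by the grid emission factor at the site. The sketch below assumes that approach and stores all inputs next to the result, plus a SHA-256 fingerprint, so that a value can be recomputed and checked during an audit. Embodied (hardware manufacturing) emissions are not covered; function names and example values are hypothetical.

```python
import hashlib
import json


def fingerprint(values: dict) -> str:
    """SHA-256 over a canonical JSON encoding of the values."""
    payload = json.dumps(values, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def calculate_emissions(energy_kwh: float, pue: float, grid_g_per_kwh: float,
                        factor_source: str) -> dict:
    """Location-based operational emissions for one AI workload.

    energy_kwh: IT energy of the workload (measured or estimated)
    pue: Power Usage Effectiveness of the data center (>= 1.0)
    grid_g_per_kwh: grid emission factor at the data center location
    factor_source: origin of the emission factor, kept for the audit trail
    """
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    facility_kwh = energy_kwh * pue
    result = {
        "energy_kwh": energy_kwh,
        "pue": pue,
        "grid_g_per_kwh": grid_g_per_kwh,
        "factor_source": factor_source,
        "facility_kwh": facility_kwh,
        "emissions_kg_co2e": facility_kwh * grid_g_per_kwh / 1000.0,
    }
    # Recomputing this digest later shows whether any stored value was altered.
    digest = fingerprint(result)
    result["sha256"] = digest
    return result


r = calculate_emissions(1000.0, pue=1.2, grid_g_per_kwh=384.0, factor_source="hypothetical")
print(round(r["emissions_kg_co2e"], 1))  # 460.8
```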

Result:

A valid, auditable carbon footprint for every AI application, including energy consumption, Scope 2/3 data and emission values per model.

Making results visible and leveraging efficiency potential

Steps:

- Reporting of energy & usage costs, model profiles and footprint data

- Compliant ESG/CSRD reporting

- Visualization of optimization potential

- Display of emissions per request or model

- Comparison of models in terms of efficiency
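Per-request and per-model figures follow from aggregating the stored records. The sketch below assumes each row already carries its calculated emissions in kg CO₂e and ranks models by grams of CO₂e per 1,000 tokens; model names and figures are hypothetical. Such intensities are only meaningfully comparable between models that serve similar tasks.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

Row = Tuple[str, int, int, float]  # (model, requests, tokens, kg CO2e)


def per_model_kpis(rows: Iterable[Row]) -> Dict[str, Dict[str, float]]:
    """Aggregate usage rows per model and rank models by g CO2e per 1,000 tokens."""
    totals = defaultdict(lambda: [0, 0, 0.0])  # requests, tokens, kg CO2e
    for model, requests, tokens, kg in rows:
        totals[model][0] += requests
        totals[model][1] += tokens
        totals[model][2] += kg

    kpis = {}
    for model, (requests, tokens, kg) in totals.items():
        if requests == 0 or tokens == 0:
            continue  # no usage, so no meaningful intensity
        kpis[model] = {
            "g_per_request": kg * 1000 / requests,
            "g_per_1k_tokens": kg * 1000 / (tokens / 1000),
        }
    # Most efficient model first.
    return dict(sorted(kpis.items(), key=lambda item: item[1]["g_per_1k_tokens"]))


rows = [
    ("large-general", 1_000, 2_000_000, 4.0),  # hypothetical figures
    ("small-specialized", 1_000, 1_500_000, 0.6),
    ("large-general", 500, 1_000_000, 2.0),
]
for model, k in per_model_kpis(rows).items():
    print(f"{model}: {k['g_per_request']:.2f} g/request, {k['g_per_1k_tokens']:.2f} g/1k tokens")
```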

Result:

Complete reporting and a concrete optimization plan that lowers energy costs, reduces CO₂ emissions and demonstrably increases the efficiency of your AI landscape.


Two scenarios: overview of potential AI carbon footprint savings for SMEs & enterprises

Scenario A - Medium-sized organization

(Example: AI for automation, service, reporting, image analysis)

Typical initial situation

- Few, but permanently running models

- No optimization

- Over-provisioning in cloud/GPU resources

- No energy or emissions monitoring

- 3 compute nodes at 1 kW each over 11,500 hours; grid factor of 384 g CO₂e/kWh

≈ 35 MWh/year; ≈ 13 t CO₂e
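The Scenario A totals are a straightforward product of the stated inputs. The sketch below reproduces them; it applies no PUE surcharge and no embodied hardware emissions, which matches the stated figures. The function name is hypothetical.

```python
def annual_footprint(nodes: int, power_kw: float, hours: float, grid_g_per_kwh: float):
    """Operational energy (kWh) and emissions (t CO2e); no PUE, no embodied emissions."""
    energy_kwh = nodes * power_kw * hours
    emissions_t = energy_kwh * grid_g_per_kwh / 1_000_000  # g -> t
    return energy_kwh, emissions_t


energy_kwh, emissions_t = annual_footprint(nodes=3, power_kw=1.0, hours=11_500, grid_g_per_kwh=384)
print(f"{energy_kwh / 1000:.1f} MWh, {emissions_t:.1f} t CO2e")  # 34.5 MWh, 13.2 t CO2e
```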

Savings potential

- Up to 50 % lower cloud & compute costs

- Noticeable CO₂ savings of 6 t CO₂e by shifting workloads to the EU

- Shorter running times & more efficient models

- Fast ROI from quick-win optimizations, e.g. €8k/year through a 25 % reduction in tokens

Why is it particularly effective?

Because energy consumption is usually concentrated in a few clearly identifiable AI systems. Small optimizations therefore quickly show noticeable effects.

Scenario B - Large company / Enterprise

(Example: a variety of models: generative AI, NLP, vision, recommendation, forecasting, chatbots)

Typical initial situation

- Self-developed and fine-tuned machine learning models

- Distributed computing capacities

- High energy, cost and water load

- >400 MWh/year of AI compute consumption (≈ 160 t CO₂e)

Savings potential

- Savings of €60k/year

- Substantial CO₂ reduction of 50 t CO₂e through location optimization

- Significant reduction in water consumption

- Performance improvements with lower power consumption

- Scalable, sustainable AI portfolio
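The Scenario B figures imply an average emission factor for the compute consumption. Taking the stated 400 MWh/year and ≈ 160 t CO₂e at face value gives the first line below. The second line assumes, for illustration only, that consumption stays at 400 MWh/year, so that the 50 t CO₂e from location optimization comes entirely from a lower average grid factor:

```latex
\begin{aligned}
\bar{f}  &= \frac{160\ \mathrm{t\,CO_2e}}{400\ \mathrm{MWh}} = 0.4\ \mathrm{\frac{t\,CO_2e}{MWh}} = 400\ \mathrm{\frac{g\,CO_2e}{kWh}} \\
\bar{f}' &= \frac{(160 - 50)\ \mathrm{t\,CO_2e}}{400\ \mathrm{MWh}} = 0.275\ \mathrm{\frac{t\,CO_2e}{MWh}} = 275\ \mathrm{\frac{g\,CO_2e}{kWh}}
\end{aligned}
```

Any efficiency gains that also lower consumption would reduce the required drop in the grid factor accordingly.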

Why is it particularly effective?

Because many interdependent AI models are in operation, driving up each other's resource consumption. Optimizations therefore have a broad leverage effect.

The most important levers for reducing the AI carbon footprint

Efficient models & architecture

- Smaller or specialized models

- Model routing depending on complexity
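Complexity-based routing can start as a simple rule set before a learned classifier is justified. The sketch below sends short prompts without signs of multi-step reasoning to a small model and everything else to a large one; the model names, the keyword list and the 150-word threshold are hypothetical placeholders that would need tuning on real traffic.

```python
SMALL_MODEL = "small-specialized"  # hypothetical model identifiers
LARGE_MODEL = "large-general"

# Hypothetical phrases that suggest a prompt needs multi-step reasoning.
COMPLEX_MARKERS = ("analyze", "compare", "explain why", "step by step")


def route(prompt: str, max_words_for_small: int = 150) -> str:
    """Return the model a prompt should be sent to, based on simple complexity heuristics."""
    text = prompt.lower()
    if len(text.split()) > max_words_for_small:
        return LARGE_MODEL
    if any(marker in text for marker in COMPLEX_MARKERS):
        return LARGE_MODEL
    return SMALL_MODEL


print(route("Translate 'invoice' into German"))                  # small-specialized
print(route("Compare our Q3 and Q4 cost drivers step by step"))  # large-general
```

Logging which route each request took, together with its measured footprint, shows whether the thresholds send too much traffic to the large model.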

Token optimization & prompt efficiency

- Shorter, structured prompts

- Limits on response length

- Caching, and Retrieval-Augmented Generation (RAG) with optimized chunk size, overlap and relevance

- Token budgets and user training
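Chunk size and overlap directly determine how many tokens a RAG pipeline places into each prompt: with top-k retrieval, the retrieved context adds roughly k × chunk size tokens per request. The sketch below shows fixed-size chunking with overlap over an already tokenized text; tokenization and retrieval are outside its scope, and the parameter values are illustrative only.

```python
from typing import List


def chunk_tokens(tokens: List[str], chunk_size: int, overlap: int) -> List[List[str]]:
    """Split tokens into chunks of at most chunk_size that share `overlap` tokens with the previous chunk."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must satisfy 0 <= overlap < chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # the last chunk already reaches the end of the text
    return chunks


tokens = "the quick brown fox jumps over the lazy dog again".split()
for chunk in chunk_tokens(tokens, chunk_size=4, overlap=1):
    print(chunk)
```

Larger overlaps reduce the risk of splitting relevant passages at chunk boundaries but add redundant tokens to every prompt; measuring retrieval quality against tokens per request helps find the balance.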

Infrastructure, Compute & Carbon-Aware Deployment

- Energy-efficient hardware

- Automatic scaling

- Location- and time-optimized workloads (carbon-aware scheduling)
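Carbon-aware scheduling can be sketched as a search over forecast grid intensities: for a deferrable job of known duration, pick the region and start hour with the lowest average intensity. The sketch assumes hourly forecasts in g CO₂e/kWh are already available from some grid-data source; region names and values are hypothetical, and constraints such as data residency, latency or capacity are ignored.

```python
from typing import Dict, List, Optional, Tuple


def best_slot(forecasts: Dict[str, List[float]], duration_h: int) -> Tuple[str, int, float]:
    """Return (region, start hour, average g CO2e/kWh) of the lowest-carbon window.

    forecasts maps each candidate region to hourly grid-intensity forecasts
    in g CO2e/kWh; the job is assumed to run for duration_h consecutive hours.
    """
    if duration_h <= 0:
        raise ValueError("duration_h must be positive")
    best: Optional[Tuple[str, int, float]] = None
    for region, series in forecasts.items():
        for start in range(len(series) - duration_h + 1):
            avg = sum(series[start:start + duration_h]) / duration_h
            if best is None or avg < best[2]:
                best = (region, start, avg)
    if best is None:
        raise ValueError("no forecast window is long enough for this job")
    return best


# Hypothetical forecasts for the next six hours.
forecasts = {
    "region-a": [420, 410, 380, 300, 250, 260],
    "region-b": [200, 190, 180, 220, 240, 260],
}
print(best_slot(forecasts, duration_h=3))  # ('region-b', 0, 190.0)
```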

Transparency, monitoring & awareness

- CO₂ per inference or per 1,000 tokens

- Display of consumption per prompt

- Dashboards & ESG integration

- Clear governance rules

Impact of the AI carbon footprint on companies

Technical effects

- Higher requirements for monitoring, metrics & energy transparency

- Necessary conversion to energy-efficient model pipelines

- Integration of emission-optimized deployments

Economic impact

- Noticeable reduction in cloud and GPU costs

- Lower OPEX through optimized workloads

- Investment protection for future AI infrastructures

Regulatory effects

- Obligation to disclose emissions in the AI context

- Compliance with CSRD, ESG and EU taxonomy standards

- Increasing expectations of stakeholders for “Responsible AI”

What companies need to do now

Reducing the AI carbon footprint requires a structured approach: collecting data, making energy consumption visible, reviewing the architecture, testing optimizations and establishing long-term processes.

Determine which models are particularly energy-intensive and which data pipelines generate the greatest loads.

Create transparency about energy consumption, electricity mix, CO₂ factors and water consumption.

Modernize models through compression, distillation, architecture optimization and transition to smaller models.

Shift workloads to where the electricity mix is more climate-friendly – or implement carbon-aware scheduling.

Define KPIs, for example:

- CO₂ per inference

- Energy per training run

- Emissions per customer request

Report emissions and energy consumption in an auditable manner.

How Ventum Consulting supports AI carbon footprint optimization

Ventum Consulting offers companies comprehensive support in the measurement, optimization and strategic integration of the AI carbon footprint. With technical expertise, proven frameworks and a scalable process model, we help to cut costs, reduce emissions and build sustainable AI architectures.

- AI Carbon Footprint Assessment

- Green-AI engineering & model optimization

- Carbon-Aware Scheduling & Deployment

- Cloud & edge optimization

- ESG/CSRD reporting & KPI frameworks

- End-to-end implementation & scaling

Arrange a non-binding initial consultation now

- Strategic: energy-efficient AI, sustainable architecture, cost reduction

- Safe: ESG and CSRD-compliant emissions monitoring

- Measurable: clear KPIs for electricity, CO₂ and water consumption

- Holistic: architecture, technology, governance & reporting from a single source

TISAX and ISO certification for the Munich office only


Frequently asked questions about the AI Carbon Footprint

The energy requirements of large AI models lead to high levels of waste heat, which must be dissipated through cooling. Many data centers use water-based cooling systems, which means that water consumption is directly linked to the intensity of the training and inference load. More efficient models and optimized deployment strategies also reduce water consumption.
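A first estimate of on-site cooling water can be derived from IT energy and the data center's Water Usage Effectiveness (WUE), a metric defined by The Green Grid as annual site water usage divided by IT equipment energy, in liters per kWh. The sketch assumes the WUE value is provided by the operator; the 1.8 L/kWh used below is a placeholder, not a measured figure, and water consumed off-site for electricity generation is not included.

```python
def site_water_liters(it_energy_kwh: float, wue_l_per_kwh: float) -> float:
    """On-site cooling water estimate from IT energy and Water Usage Effectiveness (WUE).

    WUE = annual site water usage / IT equipment energy, in L/kWh.
    Off-site water used in electricity generation is not included.
    """
    if it_energy_kwh < 0 or wue_l_per_kwh < 0:
        raise ValueError("inputs must be non-negative")
    return it_energy_kwh * wue_l_per_kwh


# Placeholder WUE; use the value reported by the data center operator.
print(site_water_liters(34_500, wue_l_per_kwh=1.8))
```

Because the estimate scales linearly with IT energy, every kilowatt-hour saved through more efficient models or deployment lowers the water figure by the same proportion.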

No. The footprint is highly dependent on model size, data sets, architecture, training frequency, location of the data center and the type of inference. Large language models (LLMs) and multimodal models generate disproportionately high energy and emission loads, while smaller, specialized models often manage with a fraction of the resources.

Yes – the economic impact is clearly noticeable in most cases. Companies not only reduce energy costs and cloud expenditure, but also improve the utilization of their compute resources. In addition, a low-emission AI landscape strengthens ESG ratings, investor confidence and regulatory compliance.

Energy-efficient hardware such as TPUs, specialized GPUs or neuromorphic chips can significantly reduce the carbon footprint. At the same time, architectural decisions (edge deployment vs. cloud training) can influence emissions as much as model parameters do.

Through continuous measurement, energy monitoring dashboards, power mix optimization, automated scheduling and the standardization of efficient AI architectures. Companies that firmly anchor energy efficiency in MLOps pipelines ensure long-term performance, compliance and cost benefits.

Large language, vision and multimodal models are particularly energy-intensive, as they contain many parameters and have to process large amounts of data. Models that run continuously in real time, such as chatbots, recommendation systems or automated analyses, also consume a lot of energy, as their inference load is continuously high.